Knowledge graphs are dead for predictive graph analytics – long live dynamic knowledge graphs

Graph analytics on knowledge graphs is currently hyped by many technology forecasting companies. “Gartner predicts that by 2025, graph technologies will be used in 80% of data and analytics innovations, up from 10% in 2021” (Ref. 1), and Verified Market Research projects phenomenal growth at a CAGR of 32.64% from 2020 to 2027, reaching a market size of USD 5.4 billion (Ref. 2).

They are right that graph analytics has already demonstrated its impact in linking scientific publications, spotting innovation and collaboration, know your customer (KYC) in banking, marketing and many other applications. They are also right that we are only starting to use graph analytics to its full potential and keep identifying new applications. But many of the insights currently promised cannot be delivered with the toolsets we have in hand today. Dynamic Knowledge Graph (DKG) systems are one possibility to meet the high expectations raised by these promises.

Follow me for a second on a little journey. Read word by word, aloud and slowly: spring :: temperature :: outside :: leaf :: strength :: energy :: coiled.

Did something happen at the last word? Did your understanding change from spring, the season, to spring, the mechanical helix that stores energy? This switch of meaning is simple or highly complex, depending on whether one is a human or a knowledge graph algorithm.

Already in kindergarten, little children learn about homophones (words with the same pronunciation but different meanings). This often happens in the form of jokes: >>What do you call a deer with no eyes? – No idea!<< and >>What do you call a deer with no eyes and no legs? – Still no idea!<<. >>No idea? or No eye deer?<< A little later, when we learn to write in school, we learn about homonyms (words with the same pronunciation and the same spelling but different meanings).

>>bow<< is a classic example of a homonym in the broad sense: the spelling is shared, although some of the meanings are pronounced differently. Depending on context it can have many meanings, or as one would say in etymology, the study of the history of words: the different meanings of >>bow<< represent multiple etymologically separate lexemes.

- a weapon to shoot arrows

- the front of a ship

- a wooden stick with horse hair to play string instruments

- a tied ribbon

- bending forward at the waist

- bending outward at the sides

Some languages are less phonetic than others and have more homophones, to the benefit of stand-up comedians. The effects of homonyms on children's learning have been studied (see Ref. 3), and it is interesting to note that several studies show positive learning effects even for second languages, e.g. “Homophones facilitate lexical development in a second language” (see Ref. 4-6).

If you had never heard the >>no eye deer<< joke before, your brain delivered an enormous transformative performance by understanding the unexpected twist. I like jokes that create moments, sometimes seconds, of confusion, where in the best case I even have to read the joke again before the twist in meaning kicks in. I (or my brain itself?) congratulate and reward myself for that good transformational performance by laughing. The transformational process differs from person to person, depending on culture, field of expertise and prior knowledge.

In understanding the meaning of >>spring<< in the word-by-word list above, your brain again performed something that is hard to achieve with a knowledge graph without some extensions. Depending on your background, job and culture, you most probably started with ‘the season’ or the ‘natural water source’ meaning. It is very unlikely that you belong to the small minority of coil spring manufacturers or mechanical engineers who might have started with the mechanical helix device. The next two words, >>temperature<< and >>outside<<, are compatible with all three meanings, but in general knowledge texts they are associated much more often with ‘the season’ meaning, followed by the ‘natural water source’ meaning, and only very rarely with the ‘helix device’.

What happened at >>leaf<<? This word is strongly connected to ‘the season’ meaning, so most probably your understanding now hardened towards it. What matters at this point is that you may have heard of or seen a >>leaf spring<< on old carriages or cars only once in your life. Most probably you do not even have an active memory of it, but if you see a picture, you will recall it. For the ‘natural water source’ meaning you can certainly imagine a leaf floating on a spring. >>leaf<< is not incompatible with any of the three meanings, even if at this point you did not yet have the ‘helix device’ actively on your radar as a possible meaning.

In common knowledge texts the next two words, >>strength<< and >>energy<<, are connected about equally to the different meanings. You can easily build a connection to the energizing or strengthening effect that ‘the season’ or ‘the hot spring’ might have on people. Both words support the interpretation you are following at this stage, most probably ‘the season’ meaning.

>>coiled<< is now the game changer. Suddenly there is a conflict, an incompatibility with your current interpretation. A transformative rethinking or reordering of information is required. Maybe you experienced a moment, or a second, similar to the one when understanding a joke with a twist. How this happens in our brains is the subject of current research.

This transformational process, which you managed easily, is not possible with the knowledge graph tools we have today.
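For comparison, the reading steps above can be mimicked in a few lines when all three meanings of >>spring<< are listed in advance. The following is a minimal sketch, not a description of any existing knowledge graph tool: the sense names, the starting beliefs and every compatibility weight are invented for illustration and only encode the qualitative statements made above (for example that >>coiled<< is incompatible with the season and the water source).

```python
# Toy word-by-word disambiguation of "spring" with a FIXED list of senses.
# All numbers below are invented for illustration, not measured from a corpus.
SENSES = ("season", "water source", "helix device")

# Hypothetical starting belief of a general reader before any context word.
PRIOR = {"season": 0.60, "water source": 0.35, "helix device": 0.05}

# Hypothetical compatibility of each context word with each sense
# (0.0 means "incompatible", equal values mean "no preference").
COMPATIBILITY = {
    "temperature": {"season": 0.6, "water source": 0.3, "helix device": 0.1},
    "outside":     {"season": 0.6, "water source": 0.3, "helix device": 0.1},
    "leaf":        {"season": 0.7, "water source": 0.2, "helix device": 0.1},
    "strength":    {"season": 1.0, "water source": 1.0, "helix device": 1.0},
    "energy":      {"season": 1.0, "water source": 1.0, "helix device": 1.0},
    "coiled":      {"season": 0.0, "water source": 0.0, "helix device": 1.0},
}

WORDS = ["temperature", "outside", "leaf", "strength", "energy", "coiled"]


def read_word_by_word(words):
    """Return (word, preferred sense) after each context word is read."""
    belief = dict(PRIOR)
    preferred = []
    for word in words:
        weights = COMPATIBILITY[word]
        # Re-weight every sense by its compatibility with the new word.
        belief = {sense: belief[sense] * weights[sense] for sense in SENSES}
        # Normalise so that the beliefs sum to 1 again.
        total = sum(belief.values())
        belief = {sense: value / total for sense, value in belief.items()}
        preferred.append((word, max(belief, key=belief.get)))
    return preferred


if __name__ == "__main__":
    for word, sense in read_word_by_word(WORDS):
        print(f"{word:>12} -> {sense}")
```

With these made-up numbers the preferred sense stays on the season for the first five words and flips to the helix device at >>coiled<<. The flip only works because the helix device was in `SENSES` from the start; if it were missing, no sense would remain compatible at the last word.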

Let us go back to information technology using knowledge graphs. If the number of concepts for >>spring<< that need to be distinguished is fixed at the beginning of the learning, a knowledge graph can be used to distinguish between these meanings. But with a non-dynamic, non-transactional knowledge graph, extending or reducing the number of concepts has to be triggered by additional external information or human correction; it is not a transactional learning process. In most cases this can only be resolved by supplying the new context split as external input and relearning the entire knowledge graph, or at least a significant sub-graph of it.
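The difference between a fixed and an extendable set of concepts can be sketched as follows. This is a deliberately small illustration under stated assumptions: `SENSES`, `resolve` and `resolve_or_extend` are hypothetical names, the context words per sense are invented, and the label of the new concept is passed in by hand because how a real dynamic knowledge graph discovers a concept split is not described here.

```python
# Toy sense inventory for "spring": sense -> context words compatible with it.
# The contents are invented for illustration only.
SENSES = {
    "season": {"temperature", "outside", "leaf", "strength", "energy"},
    "water source": {"temperature", "outside", "leaf", "strength", "energy"},
}


def resolve(senses, word):
    """Fixed inventory: return the known senses compatible with `word`.

    An empty list means the word conflicts with every known sense, and a
    static graph has no way to react to that on its own.
    """
    return sorted(s for s, context in senses.items() if word in context)


def resolve_or_extend(senses, word, new_label):
    """Extendable inventory: add a new sense when nothing is compatible.

    Returns (new_inventory, matching_senses). The input inventory is left
    untouched, so the change is all-or-nothing, loosely like a transaction.
    `new_label` is a placeholder supplied by the caller.
    """
    matches = resolve(senses, word)
    if matches:
        return senses, matches
    # Copy the inventory and add the new sense instead of relearning it.
    extended = {s: set(context) for s, context in senses.items()}
    extended[new_label] = {word}
    return extended, [new_label]


if __name__ == "__main__":
    print(resolve(SENSES, "coiled"))  # no known sense fits
    extended, matches = resolve_or_extend(SENSES, "coiled", "helix device")
    print(matches, sorted(extended))
```

The point of the sketch is only the shape of the update: the conflict at >>coiled<< leads to one local extension of the inventory, while the existing senses and their context words are kept as they are.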

Many graph compute engines are non-transactional and provide read-only graph analytics. They come in node-centric and edge-centric flavours, each with different advantages depending on the graph algorithm to be performed. They stand in contrast to graph databases, which are transactional and scale better with graph size, but scale worse for complex graph analytics that need large parts of the graph rather than a limited sub-graph.
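One common way to picture the two flavours, used here as an assumption rather than as a definition taken from any particular engine, is an adjacency list for the node-centric view and a flat edge list for the edge-centric view. The example graph and the function names below are made up for illustration.

```python
from collections import defaultdict

# Edge-centric view: the graph is a flat list of (source, target) pairs.
# The example edges are invented for illustration.
EDGES = [
    ("spring", "season"),
    ("spring", "helix device"),
    ("helix device", "coiled"),
    ("season", "leaf"),
]


def to_node_centric(edges):
    """Build the node-centric view: each node maps to its neighbours."""
    adjacency = defaultdict(list)
    for source, target in edges:
        adjacency[source].append(target)
        adjacency[target].append(source)  # treat edges as undirected
    return dict(adjacency)


def neighbours(adjacency, node):
    """Node-centric lookup: one dictionary access, well suited to traversals."""
    return adjacency.get(node, [])


def degree_counts(edges):
    """Edge-centric pass: one sequential scan over all edges."""
    degrees = defaultdict(int)
    for source, target in edges:
        degrees[source] += 1
        degrees[target] += 1
    return dict(degrees)


if __name__ == "__main__":
    adjacency = to_node_centric(EDGES)
    print(neighbours(adjacency, "spring"))
    print(degree_counts(EDGES))
```

Algorithms that walk outward from a node tend to favour the first layout, while algorithms that touch every edge once per iteration tend to favour the second.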

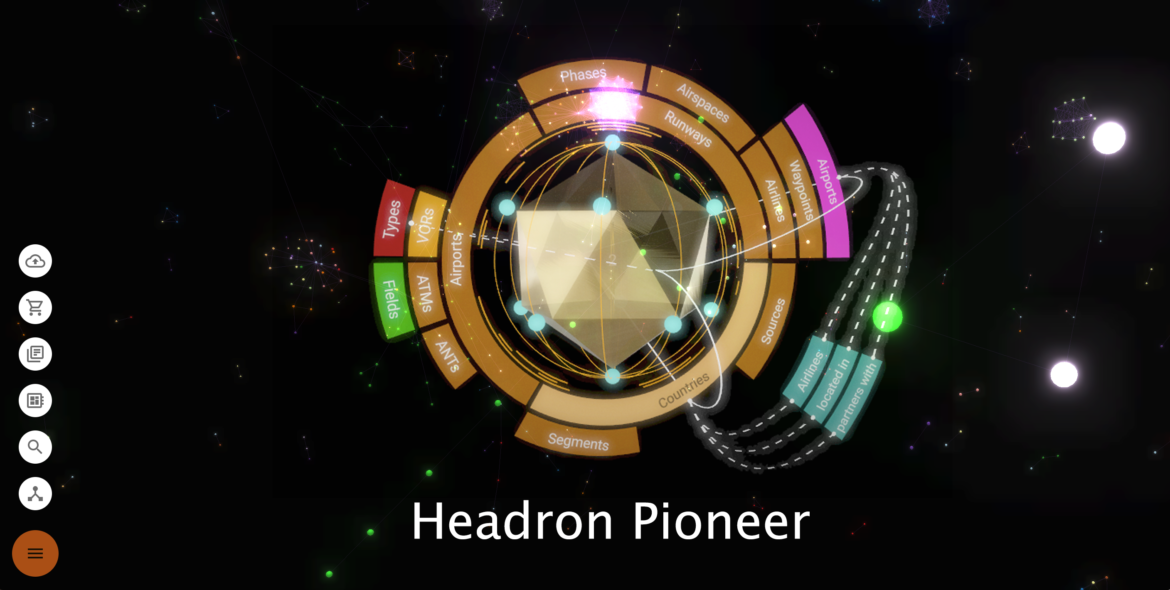

gluoNNet Headron is an in-memory, transactional, path-centric, dynamic knowledge graph analytics engine. Through forked navigation histories, it allows users to dynamically explore the different possible knowledge graph context projections of the ingested information fragments. It uses Human Readable Queries (HRC) to generate actionable insights for decision makers. It saves time and costs by accelerating the data analysis and decision iteration processes between executive decision makers and data analysis specialists. The gluoNNet Headron solution empowers them to accelerate and elevate the transformational process necessary to understand complex data and turn it into actionable insights.

For more information on our graph analytics and visualisation solutions, please contact Daniel Dobos or info@gluonnet.com.

References:

Ref. 1: Gartner, https://www.gartner.com/en/newsroom/press-releases/2021-03-16-gartner-identifies-top-10-data-and-analytics-technologies-trends-for-2021

Ref. 2: VerifiedMarketResearch, https://www.verifiedmarketresearch.com/product/graph-analytics-market/

Ref. 3: J Speech Lang Hear Res. 2013 Apr; 56(2): 694–707. Published online 2012 Dec 28. doi: 10.1044/1092-4388(2012/12-0122)

Ref. 4: Homophones facilitate lexical development in a second language, Jiang Liu and Seth Wiener, https://www.sciencedirect.com/science/article/abs/pii/S0346251X19306670

Ref. 5: The effect of homonymy on learning correctly articulated versus misarticulated words, Holly L. Storkel, Junko Maekawa, Andrew J. Aschenbrenner, https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3615102/

Ref. 6: The Effect of Semantic Similarity on Learning Ambiguous Words in a Second Language: An Event-Related Potential Study, Yuanyue Zhang, Yao Lu, Lijuan Liang and Baoguo Chen, https://www.frontiersin.org/articles/10.3389/fpsyg.2020.01633/full